In today’s digital era, the sheer volume of text generated online has created an increasing demand for intelligent systems that can understand and process multiple languages. Whether it’s for content curation, automated translation, or sentiment analysis, the ability to correctly identify the language of a given text is fundamental. This blog explores a robust multilingual dataset designed for language identification, highlighting its structure, inherent biases, and the myriad ways it powers cutting-edge AI solutions.

Introduction

Language is at the heart of human communication, and with the advent of global connectivity, our digital content has never been more diverse. However, as the variety of languages online increases, so does the complexity of processing and understanding this information. Whether it is identifying the language of a tweet or routing a news article to the appropriate translation service, automated language identification is a critical technology in modern AI.

This blog explores a multilingual dataset curated specifically for language identification. Derived primarily from news headlines and snippets, this dataset is not only valuable for building classifiers but also serves as a benchmark for academic research. In the following sections, we’ll unpack the dataset’s structure, examine potential biases, and discuss practical applications ranging from industrial deployments to educational tutorials.

Dataset Overview

The dataset under discussion is a curated collection of text samples that have been carefully labeled with their corresponding language. Featuring over 9,600 examples, the dataset encompasses ten of the most widely spoken languages in the Indian subcontinent. These languages include Hindi, Urdu, Odia, Tamil, Kannada, Bengali, Gujarati, Malayalam, Marathi, and Punjabi.

At its core, each entry in the dataset is straightforward: a snippet of text and an associated language label. This minimalistic design makes the dataset exceptionally accessible for machine learning practitioners. Its primary focus on news headlines ensures that the text samples are formal and well-structured, a characteristic that can benefit model training by reducing noise often found in more casual text sources.

Moreover, the dataset includes training, validation, and test splits, enabling researchers to build robust models and maintain a clear evaluation strategy. Distributed in efficient file formats like Parquet, the dataset is both lightweight and highly compatible with modern data processing frameworks.

Analyzing the Dataset Structure

A deep dive into the dataset’s structure reveals several features that make it a potent resource for language identification tasks.

Data Composition

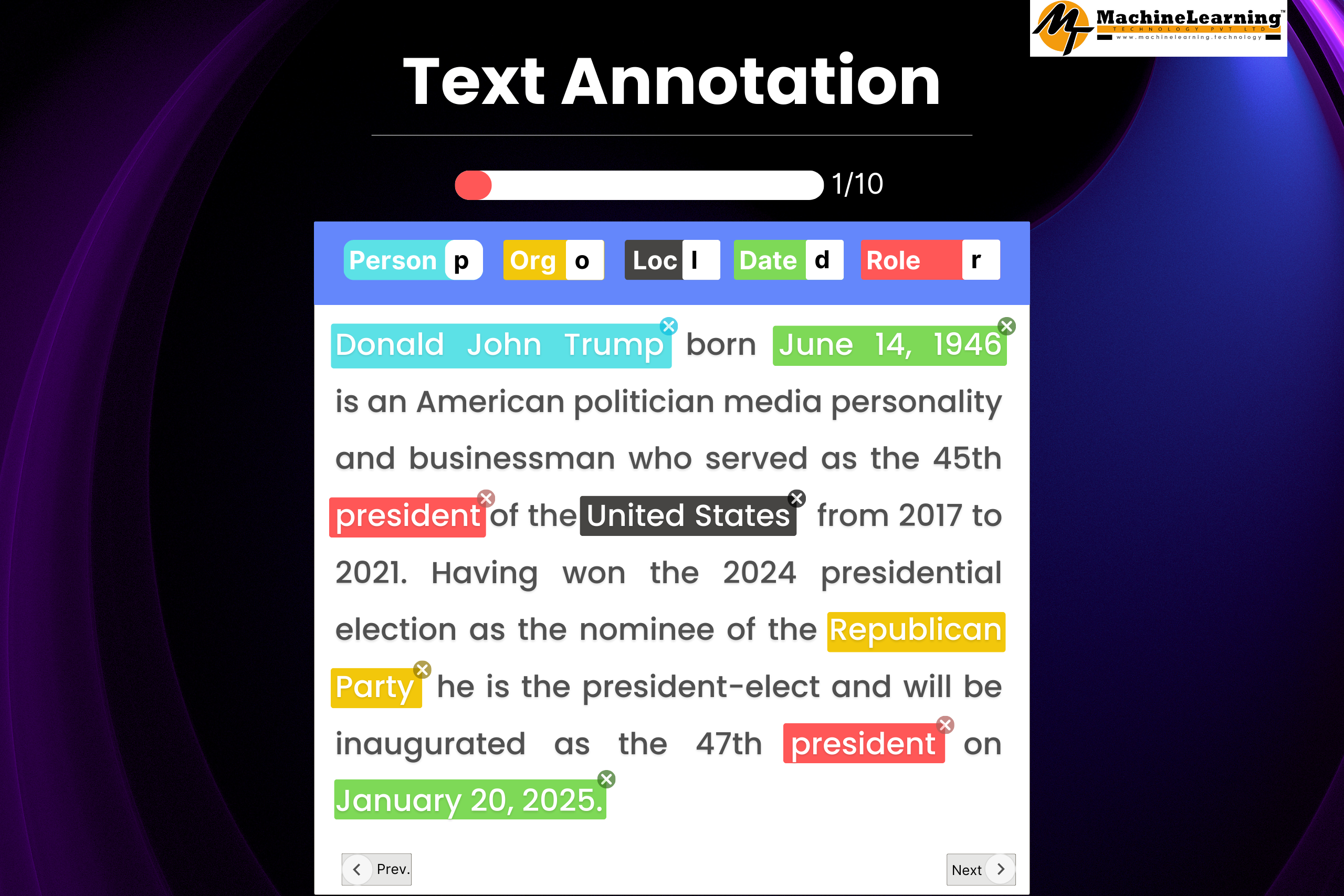

The dataset is built around two essential components:

- Text Field: Each record contains a snippet of text that is typically drawn from news sources. These snippets are concise and reflect the formal tone of journalistic writing. Their uniformity in style and structure minimizes preprocessing overhead, allowing developers to focus on model architecture rather than extensive data cleaning.

- Language Label: Alongside the text, each sample is tagged with a language label corresponding to one of the ten languages. This binary pairing (text and label) is ideal for supervised learning tasks, particularly multi-class classification. The labels not only indicate the language but also indirectly signal the type of script used, which can be a crucial feature for many machine learning models.

File Format and Accessibility

The dataset uses modern, efficient file formats like Parquet. This columnar format offers high speed and compression, enabling quick processing of large datasets. Such formats also integrate seamlessly with popular data processing libraries, including pandas and the Hugging Face Datasets library, making it simple for researchers to load, manipulate, and analyze the data.

The dataset’s open CC BY-SA 4.0 license enhances accessibility, allowing users to freely use, modify, and share the data with proper attribution. In an age where open data is a catalyst for innovation, such licensing encourages both academic research and industrial application.

Dataset Splits

For practical machine learning applications, the dataset is divided into three primary splits:

- Training Set (≈70%): The bulk of the data is allocated for training, ensuring that models have ample examples from each language. This is critical for learning the distinctive features of each language, from vocabulary to syntax and script.

- Validation Set (≈15%): A separate validation set is used to fine-tune models and monitor performance during training. This helps in preventing overfitting and ensures that the model’s learning is generalizable.

- Test Set (≈15%): Finally, an unbiased test set is maintained for the ultimate evaluation of model performance. This split provides a realistic measure of how the model will perform on unseen data, making it an essential component of the training pipeline.

This thoughtful partitioning is standard practice in machine learning and helps in accurately benchmarking the model’s performance across different stages of development.

Exploring Multilingual Coverage

One of the dataset’s standout features is its comprehensive coverage of ten major Indian languages. The languages included are:

- Hindi

- Urdu

- Odia

- Tamil

- Kannada

- Bengali

- Gujarati

- Malayalam

- Marathi

- Punjabi

Most languages in this collection have around 1,000 text samples, offering a balanced foundation for model training. However, Punjabi has only 627 examples, making it slightly underrepresented. This minor imbalance is something practitioners should note, as it might require additional strategies such as oversampling or class weighting to ensure uniform performance across all languages.

The choice to focus on these languages is strategic. They represent a significant portion of the linguistic diversity in the Indian subcontinent, each with its unique script and linguistic nuances. For instance, while Hindi and Marathi both use the Devanagari script, Urdu employs a variant of the Perso-Arabic script, and Tamil has its own distinct script. This variety challenges machine learning models to learn not just vocabulary differences but also orthographic and syntactic nuances, making the dataset invaluable for studying language identification at a deeper level.

Identifying Potential Biases

No dataset is without its challenges, and understanding potential biases is crucial for developing robust models. The following are some of the key biases identified in this multilingual dataset:

Class Imbalance

While the dataset aims for balance, the slight underrepresentation of Punjabi (627 samples compared to approximately 1,000 for the other languages) may lead to models that perform sub optimally on Punjabi texts. You can address this class imbalance by oversampling the minority class, using data augmentation, or applying class weights during model training.

Domain-Specific Bias

The dataset primarily consists of news headlines and snippets. This domain-specific nature introduces a bias toward formal language usage, as news articles typically adhere to a standardized and professional tone. Consequently, models trained on this dataset might perform exceptionally well on news content but could struggle with more informal or colloquial text such as social media posts, blogs, or conversational data. Developers should consider this bias when deploying language identification systems in diverse real-world scenarios.

Script-Based Bias

One of the dataset’s strengths is its coverage of languages with distinct scripts, which can significantly aid in language identification. However, this very feature can also lead to script-based biases. Machine learning models might learn to rely heavily on visual script cues rather than understanding deeper linguistic features. For example, distinguishing between Hindi and Urdu might become a trivial task if the model depends mostly on script differences, but such an approach might falter when encountering transliterated text or mixed-script scenarios.

Coverage Limitations

Although the dataset covers ten major languages, it does not include many regional or lesser-known languages. This focus limits the dataset’s use in fully multilingual settings where texts include a wider range of languages or dialects. Users deploying systems in linguistically diverse environments should consider supplementing this dataset with additional data to cover those gaps.

Real-World Applications

The practical utility of this multilingual dataset extends far beyond academic curiosity. Here are some of the primary applications where the dataset can make a significant impact:

Language Identification Systems

The most straightforward application is building automated language identification systems. Paired with clear language labels, text samples help developers train accurate language classifiers. These are vital for multilingual platforms like news sites, social media, and content systems.

For example, a news aggregator can use the model to auto-tag articles by language, streamlining content organization. Social media platforms can filter content and deliver localized experiences, showing users content in their preferred language.

Enhancing Machine Translation Pipelines

Accurate language detection is a critical first step in machine translation. A robust language identification system ensures the correct translation model is used, boosting translation quality and speed. In multilingual regions, quickly detecting the source language is crucial for translating content into a common language like English.

Integrating such a system into a translation pipeline not only automates the selection of the appropriate translation model but also minimizes errors caused by misidentification, thereby enhancing the overall reliability of the translation service.

Multilingual Content Analysis

For media companies and digital platforms, automatically detecting and categorizing text by language is essential. Accurate tagging enables advanced NLP techniques like sentiment analysis and trend detection per language, helping organizations gain precise insights and tailor strategies for diverse audiences.

For instance, a digital news platform could use language identification to segment its articles, allowing for targeted sentiment analysis that reflects regional trends or public opinion more accurately.

Educational and Research Applications

The dataset also serves as an excellent resource for educational purposes. It offers a clear example of supervised learning—pairing text with language labels—perfect for teaching machine learning basics. Educators can use it to create tutorials, workshops, and interactive notebooks that guide students from data exploration to model evaluation.

The dataset also serves as a benchmark for researchers comparing traditional machine learning with modern deep learning methods. Evaluating models on this dataset reveals the strengths and weaknesses of each approach, encouraging innovation in multilingual NLP.

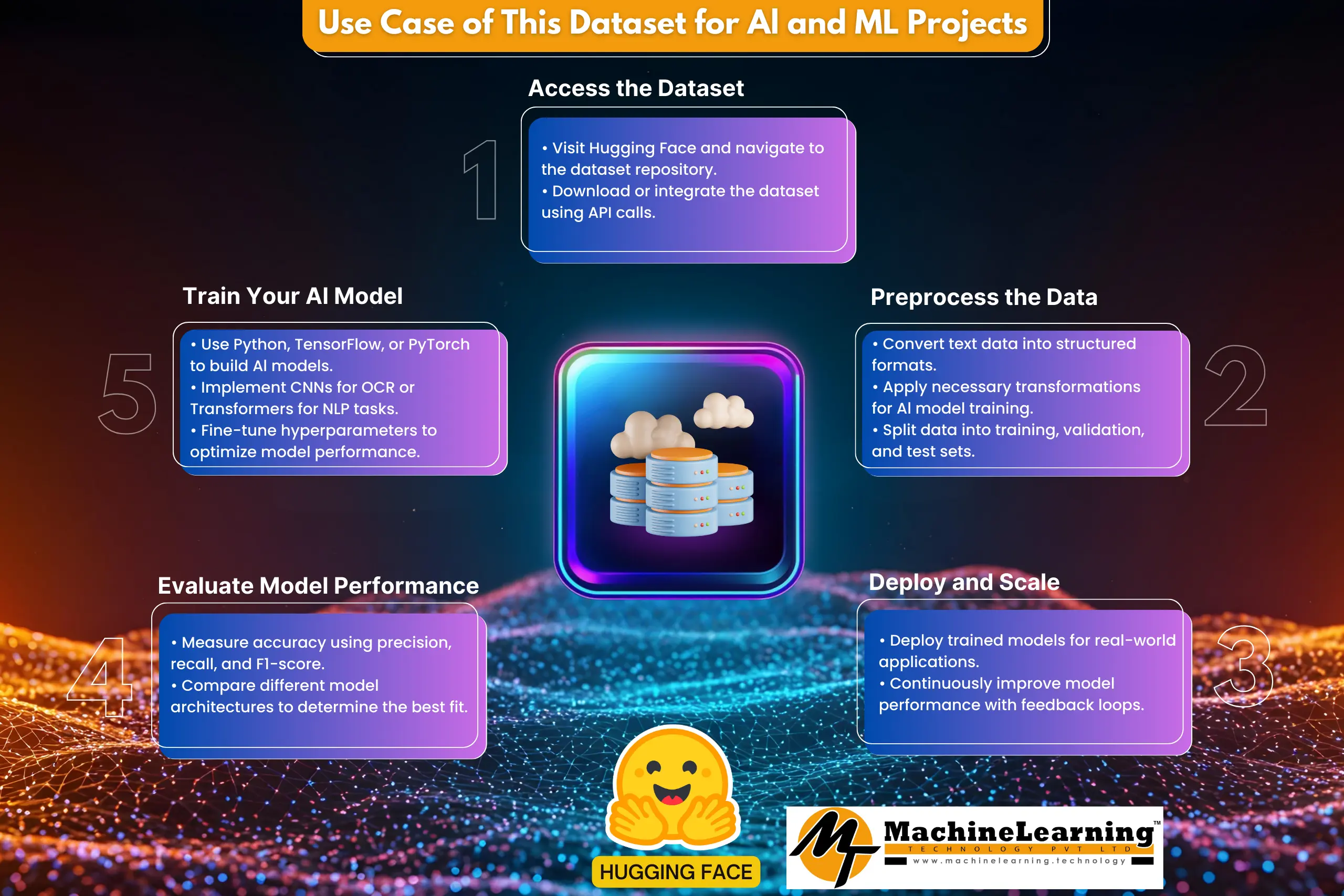

Integrating the Dataset into Your AI Projects

For developers and researchers looking to push the boundaries of multilingual AI, integrating this dataset into your projects can offer numerous benefits:

Developing Comprehensive Tutorials

One of the most engaging ways to share knowledge is by creating detailed, hands-on tutorials. You can develop a multi-part series that covers:

- Data Exploration: How to load and inspect the dataset, visualize language distributions, and understand its structure.

- Preprocessing: Techniques for cleaning text data, tokenization, and addressing class imbalances.

- Model Training: Step-by-step guidance on training a classifier using frameworks like TensorFlow, PyTorch, or scikit-learn.

- Evaluation and Optimization: Strategies to assess model performance using metrics like accuracy, precision, recall, and F1 score, along with tips on fine-tuning the model for better results.

Such tutorials demystify machine learning and equip your audience with the skills to build their own language identification systems.

Creating Interactive Demos

Interactive demos are an excellent way to engage users and illustrate the practical applications of your work. Consider developing a web-based demo where users can input text and instantly see which language is detected. This live demonstration can highlight the model’s ability to handle diverse languages and provide a tangible example of how AI can transform text-processing tasks.

Interactive elements in blog posts or tutorials help connect theory with real-world use, making the learning experience more informative and engaging.

Showcasing Industry Trends

The rise of multilingual AI is not just a technological trend—it’s a cultural shift. As global audiences seek content in their native languages, language identification systems have become essential in content management, marketing, and support. Highlighting these trends with technical content offers a complete view of the dataset’s role in the broader AI landscape.

Articles on inclusive AI, multilingual data challenges, and the future of machine translation resonate with both technical and non-technical audiences.

Conclusion and Future Directions

This multilingual dataset is more than just a set of headlines—it’s a key to unlocking language understanding in the digital age. With over 9,600 samples across ten major languages, it offers a well-structured platform for building robust language identification systems. Its clear design, balanced splits, and open access make it ideal for both beginners and experts.

We’ve explored the dataset’s structure, including its text-label format, storage, and splits. We also identified key biases like class imbalance, domain specificity, and script reliance that developers should keep in mind. Recognizing these challenges is the first step towards developing fairer, more robust AI systems.

Real-world applications of this dataset span a wide range of domains. Whether you’re building language ID systems, improving translation pipelines, or creating educational content, this dataset offers immense value. Its importance grows as AI trends increasingly demand robust multilingual support.

Looking ahead, the insights gained from working with this dataset can pave the way for future innovations. Researchers are encouraged to explore supplementary data sources to address the coverage limitations, particularly for underrepresented languages. By doing so, we can contribute to a more inclusive digital ecosystem—one where language barriers are diminished, and technology is accessible to all.

Thank you for joining us on this exploration from headlines to AI. As we continue to navigate the fascinating world of multilingual NLP, we invite you to experiment, innovate, and share your findings. Stay tuned for more in-depth analyses, tutorials, and the latest trends in artificial intelligence and machine learning.