Machine learning (ML) models require high-quality data for training, and until recently human annotation has been the benchmark for dataset labeling. Humans remain at the center of every ML operation, but the expansion of ML applications creates demand for more data and more complex relationships. That demand has given rise to synthetic data: artificially generated datasets that can stand in for human-annotated data.

This blog analyzes the effectiveness of synthetic data and human annotation, evaluating their pros and cons in various ML applications and striving to find the best approach for different use cases.

What is Human Annotation?

Human annotation is the process in which people assign meaning, in the form of labels, to characteristics or pieces of information in raw data such as text, images, videos, or audio. Automated processes often struggle with context, nuance, and cultural references, all of which are crucial for sophisticated tasks like evaluating sentiment or recognizing objects in images.

Advantages of Human Annotation

High Accuracy and Nuanced Understanding

- Context-Awareness: Humans can interpret context, idioms, sarcasm, and intricate details that an automated system can completely miss. For example, they can understand the sentiment behind a sarcastic tweet or differentiate a cat from a dog in an intricate image.

- Complex Judgments: Tasks such as document analysis or medical diagnosis go beyond simple pattern matching; they require human judgment so that the output is meaningful and reflects the depth of information in the data.

Flexibility Across Diverse Data Types

- Variety of Data: Human annotators can operate seamlessly on various data types, whether it is text, image, audio, or video. Because of this flexibility, they can take on numerous tasks, such as transcribing audio files and annotating videos.

- Adaptability: Teams can easily adapt annotation rules to integrate new data types or evolving needs, making the process usable across industries and research domains.

Robust Quality Control

- Multiple Reviews: Having several annotators label the same data and then reconciling their discrepancies is a quality control practice that improves precision and consistency.

- Error Correction: Human annotators, unlike most automated systems, can catch subtle errors that would typically go unnoticed, making the dataset more credible.

- Iterative Feedback: Annotation procedures benefit from regular reviews, which allow guidelines to be revised and lay the groundwork for continuous quality improvement over time.
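The multiple-review step above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the function name `consensus_label`, the `min_agreement` threshold of 0.6, and the sample tweets are all assumptions made for the example.

```python
from collections import Counter

def consensus_label(labels, min_agreement=0.6):
    """Return (label, agreement, needs_review) for one item's annotator labels."""
    counts = Counter(labels)
    top_label, top_count = counts.most_common(1)[0]
    agreement = top_count / len(labels)
    # A tie for first place, or weak agreement, is escalated to a human adjudicator.
    tied = sum(1 for c in counts.values() if c == top_count) > 1
    needs_review = tied or agreement < min_agreement
    return top_label, agreement, needs_review

# Hypothetical labels from three annotators per item.
annotations = {
    "tweet_1": ["positive", "positive", "negative"],
    "tweet_2": ["negative", "positive", "neutral"],
}
for item_id, labels in annotations.items():
    print(item_id, consensus_label(labels))
```

Items flagged with `needs_review` are the ones worth sending back for discussion, which is also where guideline changes tend to come from.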

Limitations of Human Annotation

Cost and Time Investment

- Resource-Intensive: Hiring trained annotators or supervising a crowd-sourcing system can be costly. For enormous datasets, the expenses may spiral out of control.

- Slow Turnaround: Manual annotation is, by definition, slower than automated means. Large-scale projects can take several weeks or even months to complete, which is a substantial drawback in time-sensitive cases.

Scalability Challenges

- Limited Throughput: Human annotation is not easily scalable. As datasets grow, finding and training enough annotators becomes exceedingly difficult.

- Project Management Complexity: Coordinating many trained annotators so that they work consistently, without sacrificing productivity or quality, becomes increasingly complex.

Human Bias and Subjectivity

- Inherent Bias: Annotators' judgments are shaped by their life experiences and their societal and cultural beliefs, so some biases can find their way into the data.

- Variability in Interpretation: The same piece of data can be viewed differently by different annotators, which can be troublesome in the absence of preset standards and quality checks.

- Mitigation Strategies: Annotation teams use approaches like blind annotation and majority voting to reduce bias in the data.
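One way to put a number on the variability in interpretation described above is an agreement statistic. The sketch below uses Cohen's kappa for two annotators; this metric is not named in the text and is swapped in here purely as an illustration, and the label lists are made up.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Agreement between two annotators, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of items")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if each annotator labeled at random with their own label rates.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)

annotator_a = ["pos", "pos", "neg", "neg", "pos", "neg"]
annotator_b = ["pos", "neg", "neg", "neg", "pos", "pos"]
print(round(cohens_kappa(annotator_a, annotator_b), 3))
```

A value near 1 suggests the guidelines are being read consistently; a value near 0 means the agreement is about what chance alone would produce.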

What is Synthetic Data?

Synthetic data is generated using various methods, ranging from statistical simulations to complex machine learning models. It is most commonly used where real data cannot be used, especially when that data is sensitive, expensive, or rare. By creating synthetic data, organizations can reduce privacy constraints, data imbalance issues, and the need for large annotated datasets.

Types of Synthetic Data:

Statistical Simulations

- Definition: Data generated by modeling and simulating the statistical properties of real-world phenomena.

- Example: Simulating customer purchasing behavior based on historical trends or generating sensor data based on physical models.

- Applications: Experts use it in fields like finance, engineering, and climate science, where mathematical models effectively capture data dynamics.
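As a rough sketch of a statistical simulation, the example below fits a normal distribution to a handful of historical purchase amounts and samples synthetic ones from it. The normal model, the function name `simulate_purchases`, and the historical values are assumptions chosen for brevity; real purchase data may call for a different distribution.

```python
import random
import statistics

def simulate_purchases(historical_amounts, n, seed=0):
    """Draw n synthetic purchase amounts from a normal fit to historical data."""
    mu = statistics.mean(historical_amounts)
    sigma = statistics.stdev(historical_amounts)
    rng = random.Random(seed)  # seeded so the synthetic dataset is reproducible
    # Clip at zero because a purchase amount cannot be negative.
    return [max(0.0, rng.gauss(mu, sigma)) for _ in range(n)]

historical = [12.5, 30.0, 22.4, 18.9, 41.2, 27.3]  # made-up values for illustration
synthetic = simulate_purchases(historical, n=1000)
print(round(statistics.mean(synthetic), 2), round(statistics.stdev(synthetic), 2))
```

Comparing the summary statistics of the synthetic sample against the historical ones is a quick sanity check that the simulation preserves the properties it was meant to model.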

AI-Generated Data

- Definition: Data produced using deep learning models such as Generative Adversarial Networks (GANs) or Variational Autoencoders (VAEs).

- Example: Creating realistic synthetic images, text, or audio closely resembling their real counterparts.

- Applications: Widely applied in computer vision, natural language processing, and creative industries to generate training datasets for model development or to augment existing datasets.

Rule-Based Data Generation

- Definition: Data constructed using predefined rules, logical conditions, or deterministic algorithms.

- Example: Generating synthetic logs for cybersecurity testing or creating simulated responses in a chatbot scenario.

- Applications: Developers frequently use it in software testing, scenario simulation, and generating data for controlled experiments with specific conditions.
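Rule-based generation is the easiest of the three to sketch, because the label follows directly from the rule that produced the record. The example below generates synthetic authentication logs; the field names, user names, and the 20% failure rate are hypothetical choices for illustration only.

```python
import random
from datetime import datetime, timedelta

def generate_auth_logs(n, failure_rate=0.2, seed=0):
    """Generate n synthetic login events with labels known by construction."""
    rng = random.Random(seed)
    users = ["alice", "bob", "carol"]  # hypothetical user names
    start = datetime(2024, 1, 1)
    logs = []
    for i in range(n):
        failed = rng.random() < failure_rate
        logs.append({
            # Rule: one event every 30 seconds from a fixed start time.
            "timestamp": (start + timedelta(seconds=30 * i)).isoformat(),
            "user": rng.choice(users),
            "event": "login_failed" if failed else "login_ok",
            # Rule: failed logins are labeled suspicious, so no manual labeling is needed.
            "label": "suspicious" if failed else "benign",
        })
    return logs

for record in generate_auth_logs(3):
    print(record)
```

Because the rules are deterministic apart from the seeded random draws, the same call always produces the same dataset, which is convenient for controlled experiments.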

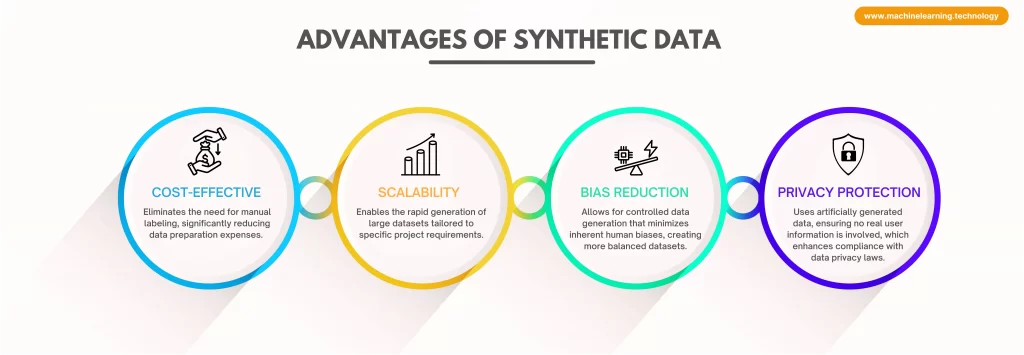

Advantages of Synthetic Data:

- Cost-Effective: Eliminates the need for manual labeling, significantly reducing data preparation expenses.

- Scalability: Enables the rapid generation of large datasets tailored to specific project requirements.

- Bias Reduction: Allows for controlled data generation that minimizes inherent human biases, creating more balanced datasets.

- Privacy Protection: This method generates artificial data and avoids using real user information, helping ensure compliance with data privacy laws.

Limitations of Synthetic Data:

- Limited Realism: Synthetic data often fails to capture the full complexity and nuances of real-world data, which can lead to oversimplified models.

- Generalization Issues: Models trained solely on synthetic data may not perform optimally on real-world tasks due to a lack of authentic contextual details.

- Requires Expertise: Generating high-quality synthetic data demands advanced knowledge in machine learning and domain-specific techniques, increasing development complexity.

Comparing Human Annotation and Synthetic Data for ML

| FEATURE | HUMAN ANNOTATION | SYNTHETIC DATA |
| --- | --- | --- |
| Accuracy | High but prone to human error. | It can be high if the generation is well-designed. |
| Scalability | Difficult to scale due to manual effort. | Easily scalable once generation is set up. |
| Cost | Expensive and requires paid annotators. | Cost-effective after initial setup. |
| Bias | Subject to human bias. | It can reduce some biases but may introduce new ones if the models are flawed. |
| Time Efficiency | Slow, especially for large datasets. | Fast to generate large volumes of data. |
| Privacy | Requires handling real user data, raising privacy risks. | Inherently privacy-friendly, uses no actual user data. |
| Generalization | Reflects real-world nuances. | It may not generalize well if it lacks real-world complexity. |

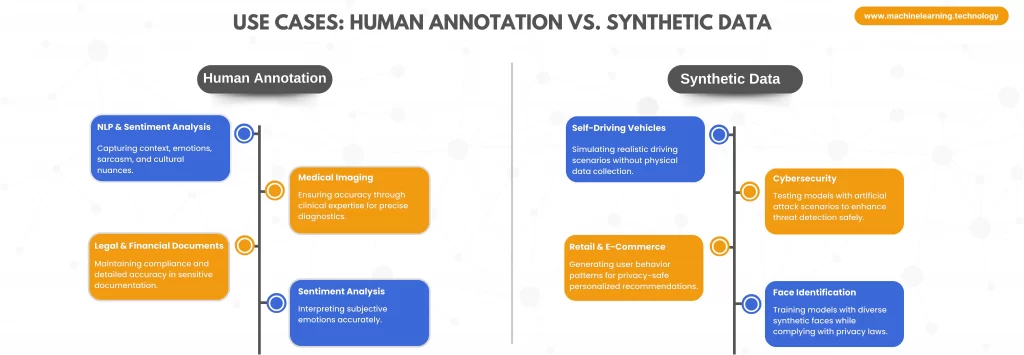

Use Cases: When to Use Human Annotation vs. Synthetic Data

Best Use Cases for Human Annotation:

- Natural Language Processing (NLP): Human annotators are sensitive to context, sarcasm, and cultural elements in language. This makes human annotation particularly useful for emotion recognition systems and for building complex multilingual models.

- Medical Imaging: Clinical expert annotators understand the underlying medical concepts and hence help ensure the correct labeling of all components in medical imaging. This is important for maintaining the required accuracy for diagnostic models.

- Legal and Financial Documents: These areas are highly sensitive. Human annotators help ensure that every legal or financial compliance requirement is respected and that no applicable constraint is missed.

- Sentiment Analysis: Human annotation is also essential in sentiment analysis because annotators can interpret subjective language and emotional tone, which makes the results easier to quantify.

Best Use Cases for Synthetic Data:

- Self-Driving Vehicles: Autonomous driving systems are improved with realistic simulated data. Synthetic data is used to create the images and sensor inputs needed to simulate different driving scenarios, which reduces the amount of on-road data collection necessary to improve the algorithms.

- Cybersecurity: Synthetic data is used to create artificial attack scenarios for security systems. This allows models to be trained and tested safely for improved threat detection without compromising actual systems.

- Retail and E-Commerce: Companies use synthesized datasets of user behavior and shopping patterns to build personalized recommendations without compromising customer privacy.

- Face Identification and Verification: To address privacy concerns in facial recognition systems, developers use synthetic facial datasets with high-quality, diverse images for training. This approach aims to maintain model accuracy without relying on real images, helping meet privacy and data protection standards.

Hybrid Approach: Combining Synthetic Data and Human Annotation

Many ML applications benefit from a hybrid approach where synthetic data is used for bulk training while human annotation refines and validates model performance.

How to Implement a Hybrid Approach:

- Pre-Training with Synthetic Data: Train the initial model with synthetic data to establish patterns.

- Fine-tuning with Human Annotation: Use real-world labeled data to improve accuracy and reduce biases.

- Active Learning: Allow the model to identify uncertain predictions and request human annotation where needed.

- Continuous Data Validation: Use human annotators to periodically check synthetic data for realism and relevance.
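The active learning step in the list above is often implemented as uncertainty sampling: the model's least confident predictions are the ones routed to human annotators. The sketch below assumes the model already outputs class probabilities; the function name `select_for_annotation`, the `budget` parameter, and the probability values are hypothetical.

```python
def select_for_annotation(probabilities, budget):
    """Return indices of the `budget` least confident predictions."""
    # Confidence is the probability of the predicted (most likely) class.
    confidence = [max(p) for p in probabilities]
    # Sort item indices from least to most confident and keep the first `budget`.
    ranked = sorted(range(len(probabilities)), key=lambda i: confidence[i])
    return ranked[:budget]

# Hypothetical class probabilities for four unlabeled items.
probs = [
    [0.98, 0.02],
    [0.55, 0.45],
    [0.70, 0.30],
    [0.51, 0.49],
]
print(select_for_annotation(probs, budget=2))  # [3, 1]
```

Only the selected items go to annotators, so human effort is spent where the synthetic pre-training left the model least sure.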

Future Trends in Data Annotation and Synthetic Data

- Self-Supervised Learning: Self-supervised learning is a training technique that does not require manually labeled input data. It exploits the inherent structure of unlabeled data, for example by masking parts of an image or text and training the model to predict the hidden parts, which yields useful general-purpose representations. This reduces the manpower and time required for annotation and is especially valuable in areas where labels are hard to obtain.

- Improved Generative Models: Generative modeling is an active research domain that has improved steadily with techniques like Generative Adversarial Networks (GANs) and diffusion models. These advances let data generation algorithms capture intricate details and features of real data and synthesize complex datasets with many fields. As a result, the gap between real and synthetic data is narrowing, which creates opportunities to use these algorithms more widely in applications like speech recognition and computer vision.

- Automated Annotation Tools: The use of AI-assisted annotation platforms is expanding to relieve some of the strain of manual labeling. These platforms use active learning, where the model identifies the most informative examples, and humans verify pre-assigned labels. Organizations can reduce costs, improve productivity, and channel human effort towards difficult edge cases instead of monotonous routine labeling work.

- Bias-Aware AI Models: Multidisciplinary analyses of bias within datasets and machine learning models are receiving more attention. Future AI systems will therefore need features that recognize and address bias in both annotated and synthetically generated data. In effect, AI will be used to audit AI, which could also make content moderation easier. Such bias-aware models can flag dataset composition for evaluation and even adjust their own training procedures to compensate for unbalanced distributions. By reducing bias, developers can provide better, more inclusive AI solutions that function in diverse environments.
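To make the masking idea from the self-supervised learning trend more concrete, here is a minimal sketch of how training pairs can be built from unlabeled text. The `[MASK]` placeholder, the 15% default masking rate, and the function name `make_masked_example` are assumptions for illustration; the model that would learn from these pairs is left out.

```python
import random

MASK = "[MASK]"  # placeholder token, chosen here for illustration

def make_masked_example(tokens, mask_prob=0.15, seed=0):
    """Build (inputs, targets) from unlabeled tokens by hiding some of them."""
    rng = random.Random(seed)
    inputs, targets = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            inputs.append(MASK)
            targets.append(tok)   # the model must predict the hidden token
        else:
            inputs.append(tok)
            targets.append(None)  # no prediction is required at this position
    return inputs, targets

sentence = "synthetic data can complement human annotation".split()
inputs, targets = make_masked_example(sentence, mask_prob=0.3)
print(inputs)
print(targets)
```

The supervision signal comes entirely from the data itself: the hidden tokens act as labels, so no annotator is involved.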

Conclusion

Synthetic data creation and human annotation both have advantages and disadvantages. Human annotation is more precise and provides deeper contextual understanding, while synthetic data is more economical, more scalable, and offers better privacy. The optimal choice depends on the particular ML task, the amount of data on hand, and the model’s needs.

Combining synthetic data for coarse-grained pre-training with human annotation for fine-tuning usually works best. As AI grows, both approaches will continue to evolve and will, in turn, shape the development of ML models.

Would you like to pursue either synthetic data creation or human annotation for your AI project? Think through your requirements and select the most suitable approach to maximize model efficiency!